For enterprises, moving to a cloud platform offers a number of benefits and speeds up innovation by removing many of the barriers that slow it down.

Cloud technology makes processes cheaper, more scalable and more flexible. It gives businesses flexible capacity for storing and distributing data, which is hard to achieve with physical data centres, and it scales massively because enterprises can purchase more capacity whenever they need it.

It removes capital investment in equipment, space, infrastructure and the like. Enterprises also save on operating expenses such as installations, patches, software, maintenance, and firewalls by simply purchasing the necessary server space on the cloud and paying only for what they use.

It offers greater speed and agility as it removes the time and costs needed to set up a new project. It avoids huge losses when a project doesn’t work and needs to pivot or be dropped. A firm can move on to a new project faster, and that project can in turn be scaled extremely quickly. It also removes silos between teams and makes global collaboration easier, as teams in different locations work from the same current information. In short, the cloud is here to stay.

Security Concerns and The Cloud

Although the cloud has many advantages, the tradeoff is that cloud infrastructure is not directly under the enterprise’s control. Enterprises need to account for new multi-cloud architectures and adopt the necessary security measures to protect their data. They must also be aware of the shared responsibility model of security, visibility issues on the cloud, and other concerns.

Cloud Security Shared Responsibility Model

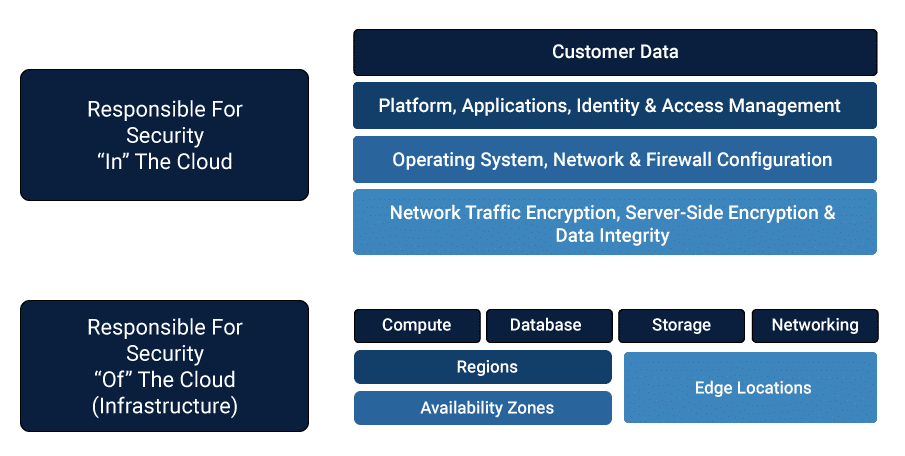

While cloud providers like AWS and GitHub provide reliable security, they do not cover the full extent of the security needed. Customers and users are also expected to secure their own information within the cloud by closing data security loopholes.

Hence, the cloud provider handles the security of the cloud, but customers are responsible for security within the cloud. Cloud vendors like AWS, GitHub, Microsoft Azure, etc. manage and control the physical security of their facilities, the host operating systems, and the virtualization layer.

Image 1: Shared Responsibility Model

However, within the cloud, customers must encrypt data in transit and at rest, and they must configure and manage security controls for their apps and the guest operating system. This is the shared responsibility model: cloud service providers and their customers each take ownership of their respective security measures.

Visibility on Cloud Systems

Another major concern with cloud security is visibility. Businesses have different strategies when it comes to choosing clouds, with some using a multi-cloud strategy where they use different cloud providers, and others using a hybrid strategy by working on both public and private clouds. Such setups are complex, and the new application architectures that come with a move to the cloud add further complexity. Businesses must therefore find ways to monitor and secure services across all of these environments, and this is what visibility is about.

A lack of visibility is a serious concern as it can hide security threats. Gaining visibility into data traffic and applications is important for businesses that move their workloads to these cloud systems. Without it, network and application performance can suffer, leading to outages and missed Service-Level Agreements.

With improved visibility, enterprises can identify performance degradation, instances of data compromise and malicious traffic, and can monitor traffic at links to the network. Gaining the holistic visibility needed to track and remedy such issues requires continuous end-to-end cloud monitoring and in-depth traffic flow analysis across the network, the applications and the entire service delivery infrastructure.

Other Security Concerns on the Cloud

Cloud technology is valuable for scaling products, applications and software, but the other side of the same coin is that minor issues can quickly scale into major problems. Enterprises also need to take sufficient steps to ensure that internal ownership of security measures is well in place. They must be aware of cloud-specific threats such as public exposure of data (because the cloud is online), unencrypted data such as unencrypted S3 buckets, open security groups, compromised EC2 instances, and gaps in visibility and auditing.

Automated Remediation For AWS and GitHub Enterprises

Security concerns such as these are dealt with through pre-cloud and in-cloud security measures that involve automated remediation of cloud-related threats. Automated solutions allow security breaches to be addressed immediately and effectively, whereas manually managing security across innumerable instances is impractical and prone to human error. The sections below use AWS and GitHub as examples of how cloud-specific threats are handled through automated remediation.

AWS

Amazon Web Services takes responsibility for protecting the infrastructure that runs all of the services provided by the AWS Cloud. This consists of the software, hardware, networking, and other facilities that form part of the AWS Cloud services. Amazon Simple Storage Service (S3), the AWS object storage service, lets development teams store and retrieve data at web scale and maximizes the benefits of scale.

However, AWS clearly states that it operates on a shared responsibility model, and customers must assume responsibility for securing their data once it is in the cloud. When working on AWS, some of the concerns to take into consideration are:

- S3 Bucket Exposure and Encryption of Data

- Port Inspection and Closure

- Ownership

- Instance Termination

S3 Bucket Exposure and Encryption of Data

When moving data and processes to the cloud, security measures can be taken ‘pre-cloud’ and ‘in-cloud’. Pre-cloud steps include setting up policies and processes that enforce encryption at rest. Encryption at rest protects data that is stored rather than in transit, including data that is used infrequently, and it complements protections such as anti-virus software and firewalls. It can be put in place through system build processes, hardened machine images and transparent encryption.

Hardened machine images work by reducing the vulnerability of virtual images through the process of hardening i.e. using pre-configured settings to automatically meet robust security benchmarks. This limits weaknesses that can make systems vulnerable to malicious cyber attacks. It can protect against unauthorized data access and other cyber threats.

Using transparent encryption or real-time encryption is another means of securing data. Data is automatically encrypted and decrypted during loading and saving in storage systems like RAIDs, servers, and SANs. These are ways in which automated remediation can be done pre-cloud.

Once in-cloud, appropriate automated measures should be run periodically to keep security robust. One problem with cloud systems is that data can be stored unencrypted because of default settings on the cloud. This can be remedied by automating bucket encryption. Automated remediation can also immediately make a bucket private if an event has caused it to become public, unless the bucket has previously been whitelisted. In addition, new files can be scanned automatically to ensure they are encrypted in line with data leakage compliance requirements.
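The decision logic behind this kind of bucket remediation can be sketched in a few lines of Python. This is a minimal illustration, not the code linked at the end of this article: the `BucketState` fields, the action names and the allowlist are all hypothetical, and a real deployment would map each action to the matching S3 API call (for example `PutBucketEncryption` or `PutPublicAccessBlock`).

```python
from dataclasses import dataclass


@dataclass
class BucketState:
    """Snapshot of one bucket. Field names are hypothetical, not an AWS API shape."""
    name: str
    is_public: bool
    default_encryption_enabled: bool


def plan_bucket_remediation(bucket, public_allowlist):
    """Return the list of corrective actions for one bucket."""
    actions = []
    # Turn on default encryption wherever it is missing.
    if not bucket.default_encryption_enabled:
        actions.append("enable_default_encryption")
    # Revert public exposure unless the bucket was explicitly allowlisted.
    if bucket.is_public and bucket.name not in public_allowlist:
        actions.append("make_private")
    return actions


if __name__ == "__main__":
    allowlist = {"public-website-assets"}  # hypothetical allowlisted bucket
    exposed = BucketState("billing-exports", is_public=True,
                          default_encryption_enabled=False)
    print(plan_bucket_remediation(exposed, allowlist))
```

Keeping the decision separate from the API calls makes the policy easy to test before it is allowed to change anything in a live account.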

Other in-cloud automated remediation methods include using steganography for hiding data in audio, image and text files to protect it from attacks. Enterprises can also copy uploads to a separate cloud provider and create intracloud duplication. These are the different ways in which automated remediation can secure data in-cloud.

Port Inspection and Closure

Prior to moving to the cloud, enterprises depend on network security and firewalls. In the pre-cloud stage, automated remediation can be used to ‘deny by default’, which means that unless an action is specifically allowed or whitelisted, it is automatically denied. On a perimeter device like a firewall, an enterprise can define the permitted ports and protocols and turn everything else off.
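As a small illustration of ‘deny by default’, the Python sketch below permits traffic only when it matches an explicit rule. The allowlisted protocol and port pairs are assumptions chosen for the example, not a recommended policy.

```python
# Hypothetical allowlist of (protocol, port) pairs; everything else is denied.
ALLOWED_RULES = {("tcp", 443), ("tcp", 22)}


def is_allowed(protocol, port, allowed=ALLOWED_RULES):
    """Deny by default: permit only traffic that matches an explicit rule."""
    return (protocol.lower(), port) in allowed


if __name__ == "__main__":
    for proto, port in [("tcp", 443), ("udp", 53), ("tcp", 3389)]:
        verdict = "allow" if is_allowed(proto, port) else "deny"
        print(proto, port, verdict)
```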

Another pre-cloud measure is to use network change management to streamline configuration and network management and gain visibility into threats. By deploying network change management an enterprise can manage multiple policies, reduce human error, avoid duplication of work and streamline security deployment.

Within the cloud, security groups are associated with instances and provide security at the port access level. Security groups act as virtual firewalls for instances to control inbound and outbound traffic with a predetermined set of rules. However, users can accidentally or unknowingly open sensitive ports to the entire Internet and compromise security. Automated remediation can manage these events by locking down the ports by auto-correcting the security group.
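The detection half of this auto-correction might look like the following Python sketch. The input dictionary mirrors the `IpPermissions` entries that the EC2 `DescribeSecurityGroups` call returns. The list of sensitive ports is an assumption, and the revocation step (for example via `RevokeSecurityGroupIngress`) is deliberately left out.

```python
SENSITIVE_PORTS = {22, 3389, 3306, 5432}  # assumed list; adjust to your own policy
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}        # "anywhere" for IPv4 and IPv6


def find_open_sensitive_rules(security_group):
    """Return (port, cidr) pairs where a sensitive port is open to the whole Internet."""
    findings = []
    for perm in security_group.get("IpPermissions", []):
        # Only port-based protocols and "-1" (all traffic) are relevant here.
        if perm.get("IpProtocol") not in ("tcp", "udp", "-1"):
            continue
        from_port = perm.get("FromPort")
        to_port = perm.get("ToPort")
        if from_port is None or to_port is None:
            # No port range on the rule: treat it as covering every port.
            from_port, to_port = 0, 65535
        cidrs = [r["CidrIp"] for r in perm.get("IpRanges", [])]
        cidrs += [r["CidrIpv6"] for r in perm.get("Ipv6Ranges", [])]
        for cidr in cidrs:
            if cidr not in OPEN_CIDRS:
                continue
            for port in sorted(SENSITIVE_PORTS):
                if from_port <= port <= to_port:
                    findings.append((port, cidr))
    return findings


if __name__ == "__main__":
    group = {
        "GroupId": "sg-0123456789abcdef0",  # hypothetical ID
        "IpPermissions": [
            {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
             "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
            {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
             "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
        ],
    }
    print(find_open_sensitive_rules(group))
```

Each finding identifies a rule that a remediation job could then revoke or narrow to a known address range.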

Ownership

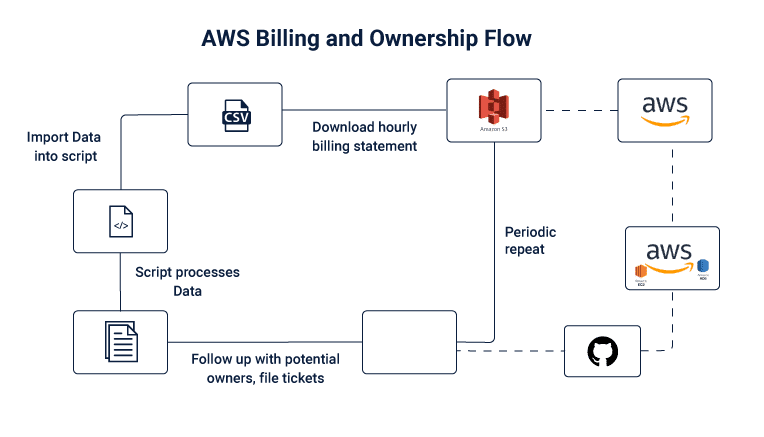

Before moving to the cloud it is easy to track ownership since enterprises know who owns servers and storage. This is not the case in the cloud as virtually anyone can spin up a server and allocate storage without sharing information. This creates a problem where no one knows about ownership in case of an incident. One way to manage this is by using AWS tags to track departmental ownership. ZocDoc has shared an AWS billing and ownership model that tracks ownership of servers and storage allocation responsibility.

Image 2: Flow of AWS Billing and Ownership

Enterprises can use automated remediation to track ownership via billing statements on the cloud by applying the following code. ZocDoc has publicly shared the entire code on GitHub which is linked further on.

Source Code Highlights I

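```bash
#!/bin/bash

# Path to the billing CSV, passed as the first argument
input=$1

# convert csv titles to valid sql column names:
# on the header line only, replace ':', '/' and '-' with '_' and append '2'
sed -e '1 s/[:/-]/_/g' -e '1 s/$/2/g' "$input" > temp.csv

# run the queries in processing.sql against the cleaned CSV
sqlite3 < processing.sql
```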

Source Code Highlights II

```sql
.open cloudhealth-db.sqlite3
.mode csv
.import temp.csv cloudhealthtable
.mode tabs
.header on
.output s3_bucket_owners.txt
select distinct
    lineItem_UsageAccountId,
    lineItem_ResourceId,
    resourceTags_user_Owner
from cloudhealthtable
where
    lineItem_ProductCode = 'AmazonS3'
;
```

Source Code Highlights III

```sql
.output ec2_owners.txt
select distinct
    lineItem_UsageAccountId,
    lineItem_ResourceId,
    resourceTags_user_Owner,
    resourceTags_user_Creator,
    resourceTags_user_Name,
    resourceTags_user_Project
    -- more useful columns...
from cloudhealthtable
where
    product_productFamily = 'Compute Instance'
;
```
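Once the owner reports exist, a natural next step is to list the resources that have no owner tag at all. The Python sketch below is an illustrative add-on rather than part of the shared ZocDoc code. It assumes the tab-separated layout with a header row that `.mode tabs` and `.header on` produce, and the function name `unowned_resources` is hypothetical.

```python
import csv
import io


def unowned_resources(report_text, owner_column="resourceTags_user_Owner"):
    """Return resource IDs whose owner tag is empty in a tab-separated report."""
    reader = csv.DictReader(io.StringIO(report_text), delimiter="\t")
    return [
        row["lineItem_ResourceId"]
        for row in reader
        # A missing or blank owner value both count as "no owner".
        if not (row.get(owner_column) or "").strip()
    ]


if __name__ == "__main__":
    sample = (
        "lineItem_UsageAccountId\tlineItem_ResourceId\tresourceTags_user_Owner\n"
        "111111111111\tbucket-a\tdata-team\n"
        "111111111111\tbucket-b\t\n"
    )
    print(unowned_resources(sample))
```

In practice the text would be read from `s3_bucket_owners.txt` or `ec2_owners.txt`, and the resulting list could feed a ticketing or notification step.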

Instance Termination

In cloud systems, tools and visibility differ from those of traditional storage and processing systems, and visibility, scale and time are among the major concerns. Tools can automatically detect an instance performing a DNS lookup of a malicious hostname, and that instance can then be killed. Automated remediation enables instantaneous detection of and response to malicious threats.
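A minimal Python sketch of the detection step is shown below. The event format (`instance_id`, `query_name`) and the blocklist are assumptions made for illustration, since the source of the DNS logs is not specified here. Terminating the flagged instances is left to whatever tooling the enterprise already uses.

```python
MALICIOUS_HOSTNAMES = {"bad.example.com"}  # hypothetical threat-intelligence feed


def instances_to_terminate(dns_events, blocklist=MALICIOUS_HOSTNAMES):
    """Return the sorted instance IDs that looked up a blocklisted hostname."""
    flagged = set()
    for event in dns_events:
        # Normalise: DNS names are case-insensitive and may end with a dot.
        hostname = event["query_name"].rstrip(".").lower()
        if hostname in blocklist:
            flagged.add(event["instance_id"])
    return sorted(flagged)


if __name__ == "__main__":
    events = [
        {"instance_id": "i-0abc", "query_name": "Bad.Example.com."},
        {"instance_id": "i-0def", "query_name": "aws.amazon.com"},
    ]
    print(instances_to_terminate(events))
```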

GitHub

GitHub Enterprise supports software development workflows and helps secure code from the ideation to production stage. When transitioning to the cloud via GitHub Enterprise, some of the concerns that can arise are:

- Public Code Repositories Getting Exposed

- Repository Visibility and Auditing

- Committing to Repository Ownership

Public Code Repositories Getting Exposed

Pre-cloud security measures relied on on-premises code repositories such as Team Foundation Server and Git, which provide source code management as well as reporting, testing, and other capabilities.

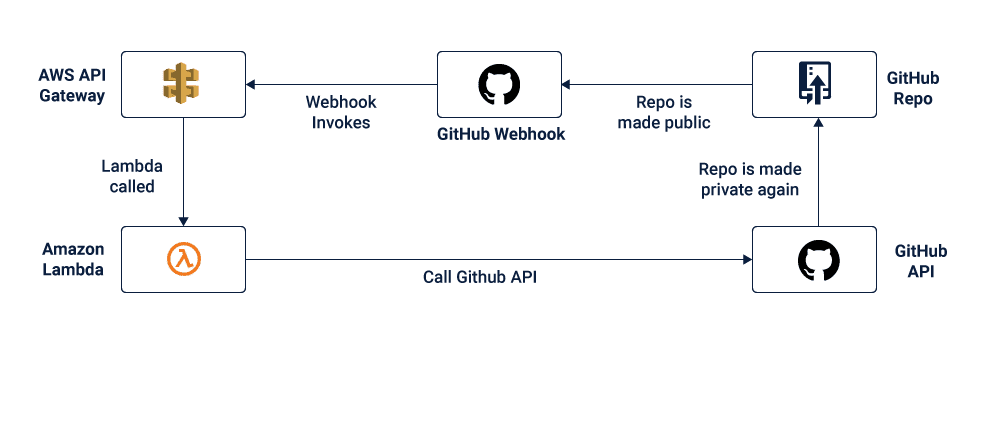

Once in the cloud, many enterprises rely on CodeCommit, GitHub, BitBucket and other source control services that host Git-based repositories. A major security threat in using these is that a repository can be made public and a user may accidentally commit passwords and crypto keys, creating public exposure. Automated remediation can be used to convert public repositories to private.

Image 3: Automated remediation changing public repos to private
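The selection step of such a remediation can be sketched in Python as follows. The `full_name` and `private` fields match the repository objects returned by the GitHub REST API. The allowlist of intentionally public repositories and the `acme` organisation are assumptions for the example, and the call that actually flips a repository to private is omitted.

```python
def repos_to_make_private(repos, public_allowlist=frozenset()):
    """Return full names of public repositories that are not allowlisted."""
    return [
        repo["full_name"]
        for repo in repos
        if not repo["private"] and repo["full_name"] not in public_allowlist
    ]


if __name__ == "__main__":
    repos = [
        {"full_name": "acme/website", "private": False},   # meant to be public
        {"full_name": "acme/payments", "private": False},  # accidental exposure
        {"full_name": "acme/internal", "private": True},
    ]
    print(repos_to_make_private(repos, public_allowlist={"acme/website"}))
```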

Repository Visibility and Auditing

Prior to moving to the cloud, on-premises code repositories with inbuilt auditing provided the necessary security measures such as auditing for data protection. An example of this is the version control system called Subversion. Development teams use it to maintain files such as documentation, web pages and source code in both their current and historical versions.

With cloud technology, repositories are easy to create and anyone can create them. The problem that arises is one of visibility: enterprises need to know which repositories exist, what their access status is and who owns them. Automated remediation allows enterprises to identify and track the creators and collaborators of repositories, as well as hooks, deploy keys and protected branches. This automation allows enterprises to manage several hundreds of repositories easily.
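One way to picture this kind of audit is a per-repository summary record, as in the Python sketch below. The input field names and the two policy checks (unknown creator, no protected branches) are assumptions for illustration. An actual audit would populate the record from the hosting service’s API and apply the enterprise’s own rules.

```python
def audit_repository(repo):
    """Summarise one repository record and list gaps under an assumed policy."""
    gaps = []
    if not repo.get("creator"):
        gaps.append("unknown creator")
    if not repo.get("protected_branches"):
        gaps.append("no protected branches")
    return {
        "name": repo["name"],
        "creator": repo.get("creator"),
        "collaborators": len(repo.get("collaborators", [])),
        "hooks": len(repo.get("hooks", [])),
        "deploy_keys": len(repo.get("deploy_keys", [])),
        "gaps": gaps,
    }


if __name__ == "__main__":
    record = {
        "name": "payments",
        "creator": "alice",
        "collaborators": ["alice", "bob"],
        "hooks": [],
        "deploy_keys": ["ci-deploy"],
        "protected_branches": [],
    }
    print(audit_repository(record))
```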

Committing to Repository Ownership

To avoid and manage threats on the cloud, enterprises should have a team that is responsible for repositories. Security is a shared responsibility, and InfoSec and Engineering can both be involved in managing these repositories and assigning tickets.

Sharing responsibility means that developers can move between teams and work on several projects at a time. To show ownership, enterprises can overload GitHub topics by naming them after the owning team, rather than relying on GitHub’s team mechanism for repository access control, which adds to operating expenses.
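If topics are used this way, ownership can be read back mechanically. The Python sketch below assumes a hypothetical naming convention in which ownership topics start with `team-`. The prefix and the function name are illustrative only.

```python
OWNER_PREFIX = "team-"  # hypothetical naming convention for ownership topics


def owning_teams(topics, prefix=OWNER_PREFIX):
    """Return the team names encoded in a repository's topics."""
    return [
        topic[len(prefix):]
        for topic in topics
        # Ignore a bare prefix with no team name after it.
        if topic.startswith(prefix) and len(topic) > len(prefix)
    ]


if __name__ == "__main__":
    print(owning_teams(["python", "team-payments", "terraform"]))
```

A repository for which this returns an empty list has no declared owner and can be flagged for follow-up.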

Managing Cloud-Specific Threats with Automated Remediation

Moving to the cloud is a must for IT businesses that want to innovate. While it offers clear benefits such as accelerated innovation, it also comes with security concerns that must be addressed both before the move and while working on the cloud.

With AWS and GitHub as examples, this article examined various security scenarios that an enterprise may face when moving to or working on the cloud. What is abundantly clear is that security is based on shared responsibility. While cloud providers provide ample security for the infrastructure and services themselves, it is up to users and customers to ensure that security within the cloud is taken care of as well.

This is done through automated remediation: making buckets and repositories private, blocking unknown network connections, terminating unknown instances, tracking ownership, etc. Using automated tools and processes to secure information on the cloud allows enterprises to manage hundreds or even thousands of repositories. This saves time and manual effort and spares enterprises the cost of a data breach.

Code to create automated security measures can be found here for AWS and here for GitHub.
