As enterprises strive to identify and realize the value in Big Data, many now seek more agile and capable analytic systems. Key drivers include improving customer retention, influencing product development and quality, and increasing operational efficiency.

Organizations are looking to enhance their analytics workloads to drive real-time use cases such as recommendation engines, fraud detection and advertising analytics. In this whitepaper, I will draw your attention to the applications of SMACK and how it has emerged as a crucial open source technology stack for handling the high volume and velocity of “Big Data”.

Big Data Is Not Only Big, It Is Also Fast

Big data gets big through a constant stream of incoming data. In high-volume environments, that data arrives at very high rates, yet it still needs to be stored and analyzed.

Less than a dozen years ago, it was nearly impossible to process and analyze such large volumes of data on commodity hardware. Today, Hadoop clusters built from thousands of nodes are widely used to analyze petabytes of historical data.

Apache Hadoop is a software framework that many enterprises are adopting as a cost-effective platform for Big Data analytics. Hadoop is an ecosystem of several services rather than a single product, and is designed for storing and processing petabytes of data in a linear scale-out model. Each service in the Hadoop ecosystem may use different technologies in the processing pipeline to ingest, store, process and visualize data.

What’s Next After Big Data?

What Hadoop Can’t Do

The big data movement was largely driven by the demand for scale in the volume, variety and velocity of data. Much of that data is generated at high speed, such as financial ticker data, click-stream data, sensor data or aggregated logs. Apache Hadoop is certainly one of the best technologies for analyzing such large amounts of data.

However, the technology has a limitation: it is not suited to data in motion. One area where Hadoop is not recommended is transactional data. Transactional data is, by its very nature, highly complex, as a single transaction on an ecommerce site can trigger many steps that all have to be completed quickly. That scenario is far from ideal for Hadoop.

Nor would it be optimal for structured data sets that require very minimal latency, like when a Web site is served up by a MySQL database in a typical LAMP stack. That’s a speed requirement that Hadoop would poorly serve.

Fast Data – Analyze data while it is in motion, in real time

SMACK stands for Spark, Mesos, Akka, Cassandra and Kafka – a combination that’s being adopted for ‘fast data’.

The SMACK stack is widely regarded as a strong platform for building “fast data” applications. The term fast data underlines how big data applications and architectures are evolving to be stream oriented, so that value is extracted from incoming data as quickly as possible, while still supporting traditional scenarios such as batch processing, data warehousing, and interactive exploration.

The SMACK Stack

The SMACK Stack architecture pattern consists of the following technologies:

- Apache Spark (Processing Engine) – Fast and general engine for distributed, large-scale data processing. It is often used to implement ETL, queries, aggregations and machine learning applications. Spark is well-suited to machine learning algorithms, and exposes its API in Scala, Python, R and Java. Spark's approach is to provide a unified interface that can be used to mix SQL queries, machine learning, graph analysis, and streaming (micro-batched) processing.

- Apache Mesos (The Container) – A flexible cluster resource management system that provides efficient resource isolation and sharing across distributed applications. Mesos uses a two-level scheduling mechanism in which resource offers are made to frameworks (applications that run on top of Mesos). The Mesos master node decides how many resources to offer each framework, while each framework determines which resources it accepts and what application to execute on those resources. This method of resource allocation allows near-optimal data locality when sharing a cluster of nodes among diverse frameworks.

- Akka (The Model) – A toolkit and runtime for building highly concurrent, distributed, and resilient message-driven applications on the JVM (Java Virtual Machine), used for microservice development with high scalability, durability, and low-latency processing. Akka is built on the actor model, a mathematical model of concurrent computation that treats “actors” as the universal primitives of concurrent computation: in response to a message that it receives, an actor can make local decisions, create more actors, send more messages, and determine how to respond to the next message received.

- Apache Cassandra (The Storage) – Scalable, resilient, distributed database designed for persistent, durable storage across multiple data centers. Most environments will also use a distributed file system like HDFS or S3.

- Apache Kafka (The Broker) – A high-throughput, low-latency distributed messaging system designed for handling real-time data feeds. In Kafka, data streams are partitioned and spread over a cluster of machines to allow data streams larger than the capability of any single machine and to allow clusters of coordinated consumers.
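The two-level scheduling described in the Mesos entry above can be illustrated with a small sketch. This is not the Mesos API: the `Offer`, `Master` and `Framework` classes are hypothetical, and the sketch assumes, for simplicity, that an accepted offer is consumed whole by a single task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """A resource offer for one agent node (hypothetical, simplified)."""
    agent: str
    cpus: float
    mem_mb: int


class Framework:
    """Second level: the framework decides which offers to accept."""

    def __init__(self, name, cpus_per_task, mem_per_task, pending_tasks):
        self.name = name
        self.cpus_per_task = cpus_per_task
        self.mem_per_task = mem_per_task
        self.pending_tasks = pending_tasks
        self.launched = []  # (agent, task_number) pairs

    def consider(self, offer):
        """Accept the offer if it fits one pending task; return True if accepted."""
        fits = (offer.cpus >= self.cpus_per_task
                and offer.mem_mb >= self.mem_per_task)
        if self.pending_tasks == 0 or not fits:
            return False  # decline, so the master can offer it elsewhere
        self.pending_tasks -= 1
        self.launched.append((offer.agent, len(self.launched) + 1))
        return True


class Master:
    """First level: the master decides which framework is offered what."""

    def __init__(self, offers):
        self.free = list(offers)

    def run_round(self, frameworks):
        """Offer each free resource to the frameworks in order until one accepts."""
        still_free = []
        for offer in self.free:
            if not any(fw.consider(offer) for fw in frameworks):
                still_free.append(offer)
        self.free = still_free


def demo():
    master = Master([
        Offer("agent-1", 4.0, 8192),
        Offer("agent-2", 1.0, 2048),
        Offer("agent-3", 0.5, 512),
    ])
    spark = Framework("spark", cpus_per_task=2.0, mem_per_task=4096, pending_tasks=1)
    web = Framework("web", cpus_per_task=1.0, mem_per_task=1024, pending_tasks=2)
    master.run_round([spark, web])
    return master, spark, web
```

In `demo()`, the master offers the large agent to the Spark-like framework first, the second agent is declined by it and accepted by the web framework, and the smallest agent stays free because neither framework can use it. The point of the split is that the master never needs to understand what each framework wants to run.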

The SMACK stack simplifies the processing of both real-time and batch workloads by treating them as a single data stream, handled in real time or near real time.
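To make the actor model from the Akka entry above more concrete, here is a minimal single-threaded sketch in Python. It is not Akka and does not use Akka's API: `ActorSystem`, `Router` and `Counter` are hypothetical names, and messages are delivered one at a time from a single queue, whereas a real actor runtime schedules actors concurrently.

```python
from collections import deque


class ActorSystem:
    """A tiny single-threaded dispatcher (illustrative only)."""

    def __init__(self):
        self.actors = {}
        self.queue = deque()  # pending (recipient_name, message) pairs

    def spawn(self, name, actor):
        actor.system = self
        actor.name = name
        self.actors[name] = actor
        return name

    def send(self, name, message):
        self.queue.append((name, message))

    def run(self):
        """Deliver messages in FIFO order until none remain."""
        while self.queue:
            name, message = self.queue.popleft()
            self.actors[name].receive(message)


class Counter:
    """Child actor: keeps private state and reports to its parent when asked."""

    def __init__(self, parent):
        self.parent = parent
        self.count = 0

    def receive(self, message):
        if message == "report":
            self.system.send(self.parent, (self.name, self.count))
        else:
            self.count += 1  # local decision based only on private state


class Router:
    """Parent actor: creates one Counter per event type on demand."""

    def __init__(self):
        self.children = []
        self.totals = {}
        self.receive = self.routing  # current behaviour

    def routing(self, message):
        if message == "flush":
            for child in self.children:
                self.system.send(child, "report")
            self.receive = self.collecting  # decides how the next message is handled
            return
        child = f"counter-{message}"
        if child not in self.system.actors:
            self.system.spawn(child, Counter(parent=self.name))  # create more actors
            self.children.append(child)
        self.system.send(child, message)  # send more messages

    def collecting(self, message):
        child, count = message
        self.totals[child] = count


def run_demo():
    system = ActorSystem()
    router = Router()
    system.spawn("router", router)
    for event in ["click", "view", "click", "flush"]:
        system.send("router", event)
    system.run()
    return router.totals
```

Each of the four capabilities listed for an actor appears once: `Counter` makes a local decision, `Router.routing` creates actors and sends messages, and the switch from `routing` to `collecting` determines how the next message is handled. No actor ever reads another actor's state directly.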

SMACK stack: How to put the pieces together

Capturing value in fast data

The best way to capture the value of incoming data is to react to it the instant it arrives. Processing incoming data in batches adds delay, and that delay erodes the value of the data.

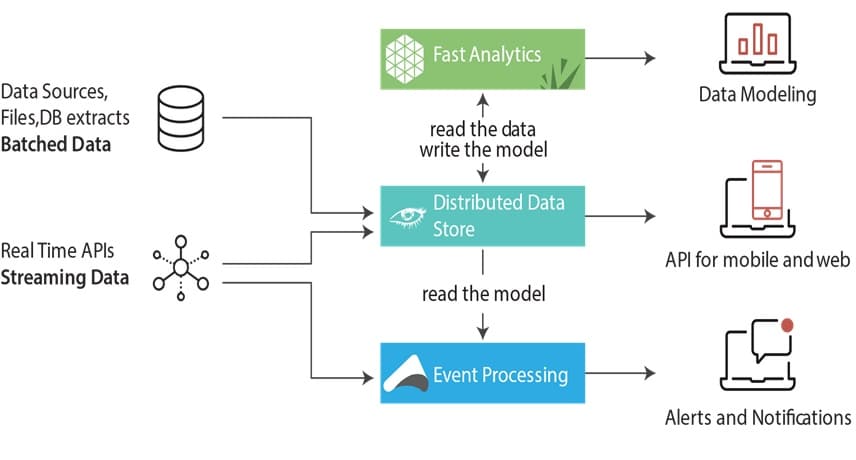

To process data arriving at thousands to millions of events per second, two technologies are required: a streaming system capable of delivering events as fast as they come in, and a data store capable of processing each item as fast as it arrives.
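The pairing of a streaming system with a per-event data store can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `event_stream` stands in for the streaming system, `RunningTotals` for the data store, and the sample events, the alert threshold and the 60-second batch window are invented.

```python
import math
from collections import defaultdict


def event_stream():
    """Stand-in for a streaming system: yields (timestamp_s, user, amount) in arrival order."""
    yield from [
        (0.0, "alice", 20),
        (0.4, "bob", 15),
        (0.9, "alice", 500),
        (1.3, "bob", 5),
    ]


class RunningTotals:
    """Stand-in for a fast data store: updates per-key state on every event."""

    def __init__(self, alert_threshold):
        self.totals = defaultdict(int)
        self.alert_threshold = alert_threshold
        self.alerts = []  # (timestamp_s, user) pairs raised on arrival

    def ingest(self, event):
        ts, user, amount = event
        self.totals[user] += amount
        if self.totals[user] > self.alert_threshold:
            self.alerts.append((ts, user))


def process_as_it_arrives(stream, store):
    """React to each event immediately instead of waiting for a batch."""
    for event in stream:
        store.ingest(event)
    return store


def batch_close_time(event_ts, batch_interval_s):
    """Earliest time a batch job with the given interval would see this event."""
    return math.ceil(event_ts / batch_interval_s) * batch_interval_s


store = process_as_it_arrives(event_stream(), RunningTotals(alert_threshold=400))
```

With per-event processing, the alert for the large purchase is raised at the moment the event arrives (0.9 s in the sample data). With a 60-second batch window, `batch_close_time(0.9, 60.0)` shows the same event would not be examined until the batch closes at 60 s.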

Delivering the fast data

To deliver fast data, two popular streaming systems, Apache Storm and Apache Kafka, have emerged over the past few years. Apache Storm (originally created at BackType and open-sourced by Twitter) reliably processes unbounded streams of data at rates of millions of messages per second.

Kafka (developed by LinkedIn), on the other hand, is a high-throughput distributed message queue system. Both systems address the need to process fast data. Kafka, however, stands apart as the message broker of the SMACK stack.
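The way Kafka spreads a stream over partitions and over a group of coordinated consumers, as described in the stack overview, can be sketched as follows. This is a plain-Python illustration and not a Kafka client: the function names are hypothetical, `zlib.crc32` is used only as a convenient stable hash (an assumption, not the hash Kafka's own partitioner uses), and the round-robin assignment is one simple strategy among several.

```python
import zlib


def partition_for(key, num_partitions):
    """Map a record key to a partition with a stable hash."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def produce(records, num_partitions):
    """Append each (key, value) record to the partition chosen by its key."""
    partitions = [[] for _ in range(num_partitions)]
    for key, value in records:
        partitions[partition_for(key, num_partitions)].append((key, value))
    return partitions


def assign(num_partitions, consumers):
    """Spread partitions over the consumers of one group, round-robin."""
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


records = [
    ("user-1", "a"), ("user-2", "b"), ("user-1", "c"),
    ("user-3", "d"), ("user-1", "e"),
]
partitions = produce(records, num_partitions=3)
group = assign(num_partitions=4, consumers=["c1", "c2"])
```

Because a key always hashes to the same partition, all records for `user-1` land in one partition in their original order, while different keys can be handled in parallel by different consumers. That is what lets a stream grow beyond the capacity of a single machine.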

Processing the fast data

Hadoop MapReduce has served the batch processing needs of customers across industries very well. However, the arrival of more flexible big data tools built for fast data processing has driven demand for Apache Spark.

Owing to its fast data processing, Spark has spread rapidly across the big data world. It has overtaken Hadoop MapReduce for workloads such as interactive data mining and iterative machine learning algorithms.

Lambda Architecture is a data processing architecture that has both stream and batch processing capabilities. However, most Lambda solutions cannot serve the following two needs at the same time:

- Handle a massive data stream in real time.

- Handle multiple, differing data models from multiple data sources.

To meet both of these needs, Apache Spark is used for real-time analysis of both recent and historical information. The analysis results are often persisted in Apache Cassandra, so users can still retrieve up-to-date information at any time, even after a failure.
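The pattern of analyzing recent data, merging it with historical results, and persisting the outcome so that it survives a failure can be sketched without either Spark or Cassandra. The code below is a plain-Python stand-in under stated assumptions: `ResultStore` is a hypothetical substitute for a durable table, the micro-batch size is arbitrary, and the input is assumed to be replayable from the start (as a log-based broker would allow).

```python
from collections import Counter
from itertools import islice


def micro_batches(events, batch_size):
    """Group an event iterator into small lists, mimicking micro-batched streaming."""
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


class ResultStore:
    """Hypothetical stand-in for a durable results table."""

    def __init__(self):
        self.rows = {}            # event type -> running count (historical results)
        self.batches_applied = 0  # progress marker that survives a restart

    def upsert_counts(self, counts):
        for key, n in counts.items():
            self.rows[key] = self.rows.get(key, 0) + n
        self.batches_applied += 1


def run(events, store, batch_size=3, max_batches=None):
    """Aggregate each recent micro-batch and merge it into the stored results."""
    already_done = store.batches_applied * batch_size
    remaining = islice(iter(events), already_done, None)  # skip what was persisted
    for i, batch in enumerate(micro_batches(remaining, batch_size)):
        if max_batches is not None and i >= max_batches:
            break  # simulate the job stopping early
        store.upsert_counts(Counter(batch))
    return store


events = ["click", "view", "click", "buy", "click", "view", "view"]
store = ResultStore()
run(events, store, max_batches=1)  # the job fails after one micro-batch
run(events, store)                 # a restarted job resumes from the store
```

After the simulated failure, the second call reads its starting point from the store rather than from memory, so the final counts match what a single uninterrupted run would have produced. The store, not the processing job, is the source of truth.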

Spark is increasingly adopted as an alternative processing framework to MapReduce because it can speed up batch, interactive and streaming analytics. Spark also enables new analytics use cases, such as machine learning and graph analysis, through its rich and easy-to-use programming libraries.